And the wheel turns

When the Arduino platform became somewhat popular around 2006 or 2007, I was hooked, and I cut my teeth on a couple of wildly overambitious projects like sequencers and MIDI controllers. I did manage to make a couple of cool things, including a motion-sensor-controlled instrument (mostly built in PureData) that was driven by kids jumping up and down on a bungee trampoline.

Over the following years, my interests shifted elsewhere. Apart from a couple of failed attempts to build a somewhat universal sync box between MIDI, the Korg Volca sync and Nanoloop (which is still somewhere on my todo list), I just had a bunch of electronics lying around in my living room drawers (and now in my studio), unused and slowly going the way of all things in the universe.

My interest in electronics was revived (more or less out of specific needs) when I got my first 3D printer, but the applications were simple and well documented.

And so I more or less completely missed how the Arduino world had evolved since those early years. I loosely followed the developments, so I knew about things like MicroPython and CircuitPython, and I started to use PlatformIO to compile modern versions of my 3D printer's firmware. But all of that was rather superficial.

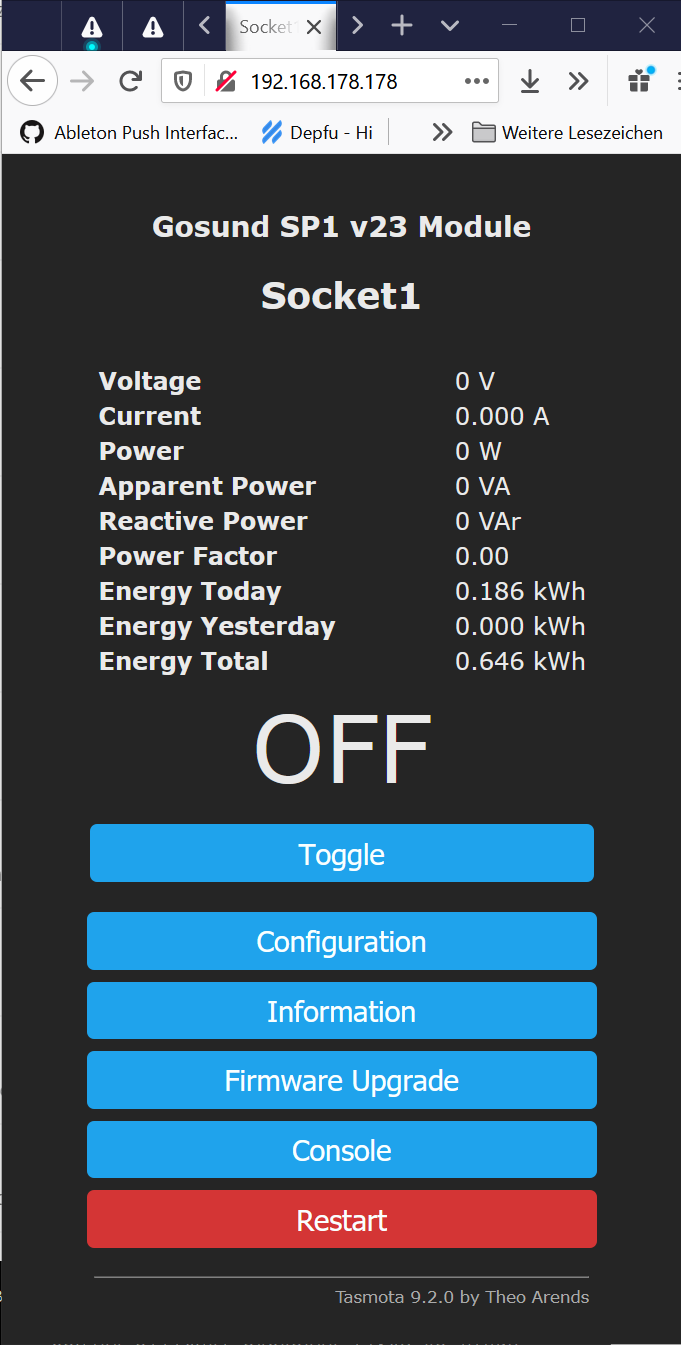

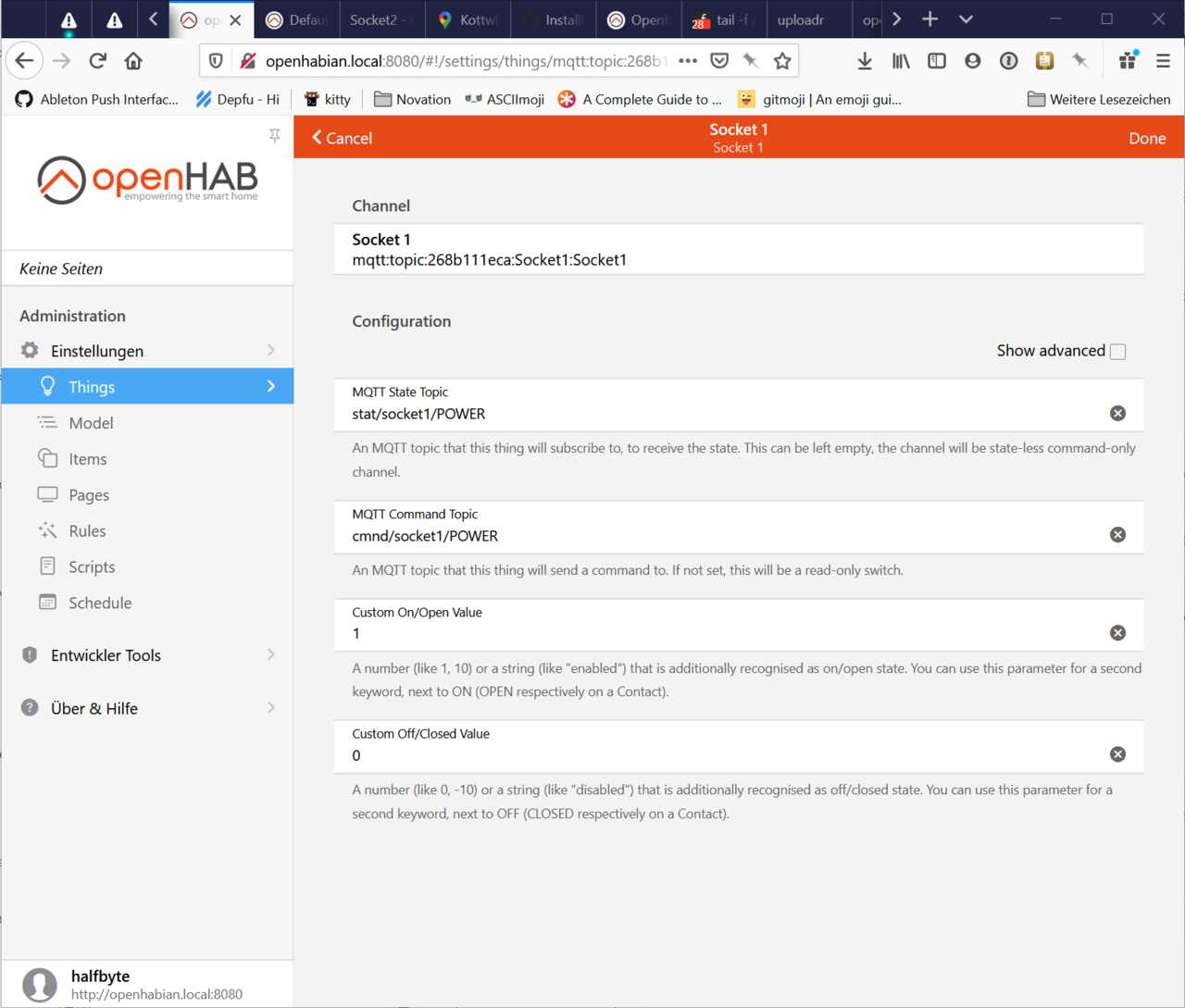

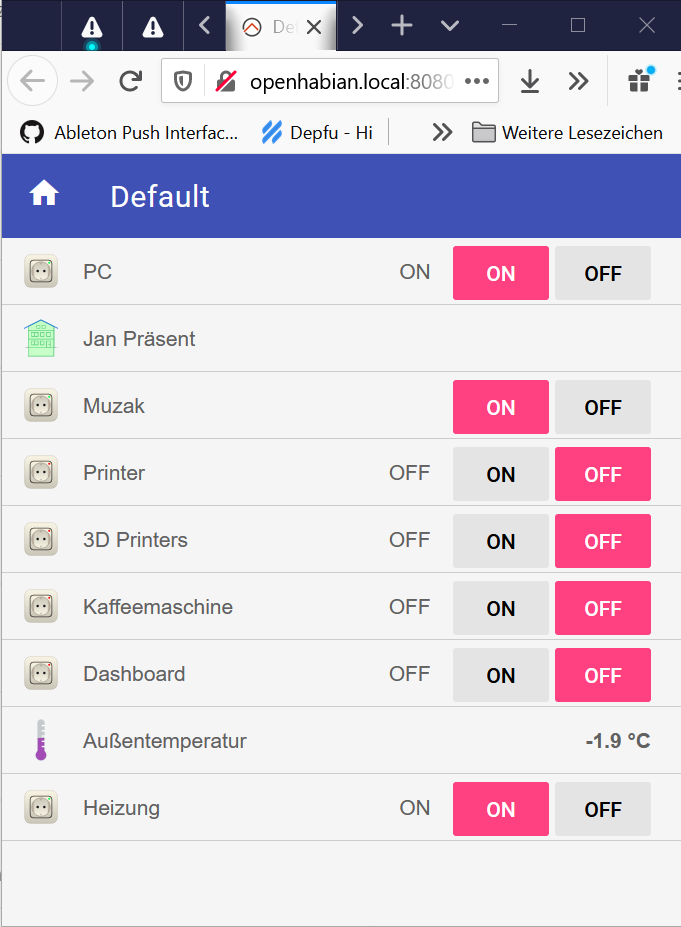

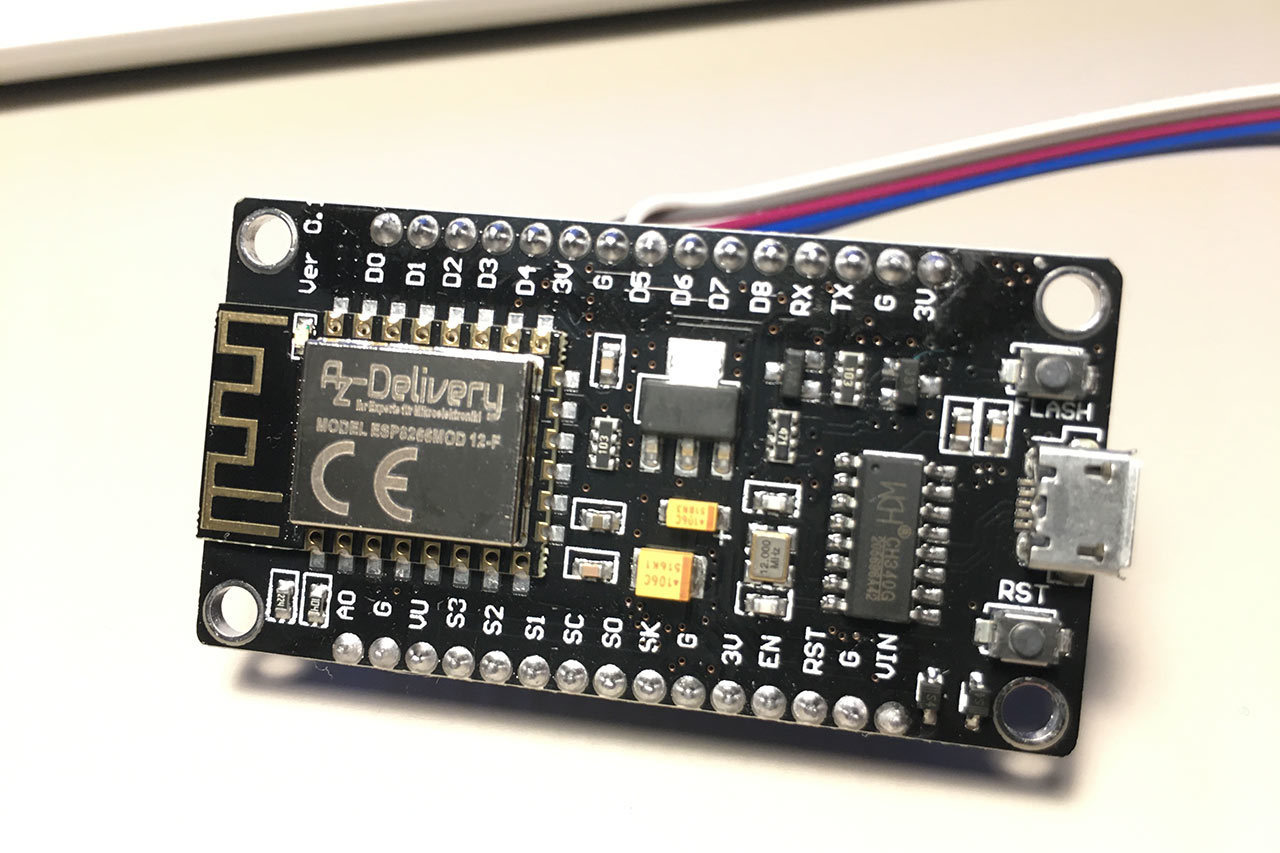

Fast forward to this year: through my experiments with home automation, I stumbled across the world of ESP8266/ESP32 boards. The ESP8266 (and its follow-up, the ESP32 family) is a microcontroller essentially made for the modern IoT world. It contains a Wi-Fi stack (ESP32s also do BLE) and is powerful enough to serve simple web interfaces, make TLS connections (even though full TLS security is a bit challenging on these devices) and the like.

And the most fascinating thing for me as a maker: these devices are super cheap. A fully featured dev board like a NodeMCU unit or the D1 mini costs around 6 EUR when sourced in Germany and can be as cheap as 3 EUR if you can stand the nerve-racking shipping from China and the following discussions with a customs officer.

And now suddenly my interest in all of these things is reinvigorated. Back then, building an internet-connected thing (which, you know, is somewhat interesting if you are a web developer in your normal life) was not only quite difficult but also a lot more expensive. Cheap Wi-Fi chips were not really a thing, and while Ethernet adapters were available, adding one immediately made a sensor or something like that 100x more clunky. Who knew that almost 15 years of technological advancement would have such an enormous effect!

So far I really haven't gotten that far in my experiments, so there is not much to document here, but one thing I noticed is how much bigger this whole ecosystem has become. That is kind of a no-brainer, of course, but to me, having been more or less away from this scene for a couple of years, the contrast is stark.

To me this boils down to a couple of points.

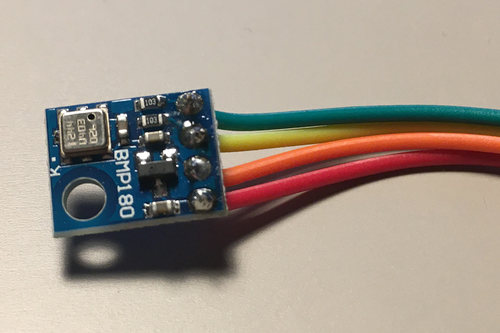

You can buy a lot more stuff more easily, and in way more accessible packages. With the still ongoing miniaturisation of all things electronics, there's a ton of components that are essentially inaccessible to a noob maker like me who has no skills (and no particularly great interest) in designing circuit boards. Take the BMP180 temperature and pressure sensor from Bosch, for example. That whole thing is probably 4x3 mm or so. Without the adapter board you can see in the picture, which someone designed and decided to mass produce, it would be completely useless to me. Now you can buy these ready-made boards for about 3-5 EUR (again, depending on your sourcing habits and the quantities you buy), take a couple of DuPont wires and connect one to your NodeMCU. With some software and a USB power supply, you have a temperature sensor you can run wherever there's a power socket.
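To give an idea of what the "some software" part boils down to for the temperature reading: the sensor only hands out a raw number plus a few per-chip calibration constants, and a small integer formula turns those into degrees. Below is a minimal sketch in plain Python (it would look much the same in MicroPython). The function name is made up for illustration, and the formula and example values are the ones from the worked example in Bosch's BMP180 datasheet, quoted from memory here, so treat them as an assumption. On a real board, a library reads the raw value and the constants over I2C for you.

```python
def bmp180_temperature_c(ut, ac5, ac6, mc, md):
    """Convert a raw BMP180 temperature reading to degrees Celsius.

    ut is the uncompensated reading; ac5, ac6, mc and md are the
    calibration constants stored on the individual sensor.
    (Hypothetical helper, not taken from any particular library.)
    """
    x1 = ((ut - ac6) * ac5) >> 15      # scale the raw offset
    x2 = (mc << 11) // (x1 + md)       # correction term (integer division)
    b5 = x1 + x2
    tenths = (b5 + 8) >> 4             # temperature in steps of 0.1 degrees C
    return tenths / 10


# Example values assumed from the datasheet's worked example.
EXAMPLE = dict(ut=27898, ac5=32757, ac6=23153, mc=-8711, md=2868)

print(bmp180_temperature_c(**EXAMPLE))  # prints 15.0
```

None of this is something you have to write yourself anymore, which is rather the point of this whole section.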

The library ecosystem for Arduino etc. exploded. There are not a lot of useful components out there for which you would not find an Arduino library. When I started to play with my first Arduinos, one of the first things I tried was interfacing with a DOGM display, which not only looked cooler than the typical LCD displays but also needed fewer wires to operate, as it used SPI for communication instead of the weird parallel protocol most text displays use. There wasn't a library for it, so I had to write one myself. It's still available on GitHub, but today of course there's a fully featured library available directly from the Arduino IDE.

These two points combined mean that “the maker” is now a veritable market in itself. Back when I started, only a couple of online shops carried Arduino and compatible things. Nowadays, you can buy stuff from Amazon, you can buy stuff directly from Chinese vendors at AliExpress or Banggood, or you can order at one of the many specialised online shops. And everything comes with references to the specific Arduino libraries or board definitions. You don't buy stuff that “works with Arduino”, you buy stuff that is specifically made for this particular ecosystem.

I already noticed this when I started to get into 3D printers, though. Suddenly, after a few searches, my internet was full of 3D printer driver boards, heating beds and matching surfaces, mechanical parts, stepper motors and so on. This was definitely stuff that wasn't as readily available when I first looked into 3D printing (roughly at the same time as my Arduino journey started).

I guess part of that is that the makersphere itself got so much bigger and became “a market” to serve. But part of it is, I guess, also down to individual efforts to make the whole world of electronics so much more accessible. My feeling is that the “old school” electronics geeks don't particularly like this, in the same way that greybeards running Unix clusters face off against those modern JavaScript hustlers.

Of course, there are the original Arduino founders to mention, who really sparked this whole movement, but I don't think you should underestimate the influence that Limor “ladyada” Fried has had over the last few years with her Adafruit empire. The number of influential hardware designs (like the Feather series of boards) and software initiatives like CircuitPython coming out of the Adafruit lair is just impressive.

The next evolutionary step was that hardware vendors started to understand and leverage that new market. Microchip, owner of Atmel and thus the main supplier of the ATmega chips you'll find on most Arduino boards, now has a much closer relationship to hobbyists. Espressif, the manufacturer of the ESP chips, actually maintains the Arduino board SDK for the ESP32, and while I haven't looked, I'm pretty sure there are examples of component manufacturers maintaining Arduino libraries for their components.

This all has a pretty weird effect on me, though. Part of the fun of adapting the DOGM display back then was having to actually read datasheets and trying to get it working. In 2021, most of that work has probably been done for you, and all there is left to do is copy and paste a couple of lines from an example file and adapt them to your needs. If your need is to get up and running quickly and solve the problem you wanted to solve, that's great, but sometimes the journey is the fun part. It will be interesting to see how this will influence my own motivation over the next few experiments.

I do think, though, that from a 3km perspective (see what I did there?) this change is fantastic for makers overall. The wide availability and accessibility of incredible hardware of all sizes and shapes is great and makes for a lot of fun.